What is Jamstack?

Jamstack is an architecture that prescribes a particular set of ingredients for your web solution, and those ingredients give it a few defining characteristics. "JAM" is an acronym that stands for:

- JavaScript

- API

- Markup

The "stack" part of Jamstack does not prescribe a particular set of technologies, unlike the LAMP stack, for example. Jamstack architecture can be realized with virtually any technology.

Let's start by breaking down the acronym, as it will help us understand the key differentiators.

"M" for Markup

Let's start with "M," the key ingredient: page markup (HTML) is generated at build time instead of at run time. The build phase is therefore critical. It runs on a build server (typically running Node.js) or is managed by the selected hosting environment (e.g., Netlify or Vercel). It consists of two steps: the "build" step, which produces the compiled application bundle, and the "export" step, which produces the static pages (the markup, or HTML). The whole process is orchestrated by a Static Site Generator (SSG), commonly referred to as a "framework." Well-known examples include Gatsby, Next.js, and Nuxt.

It's worth mentioning that you can often control which pages are statically generated and which are rendered dynamically, typically via serverless functions.

The ability to generate static markup is a critical characteristic: a given page is rendered once and then served to every visitor pre-baked, instead of being generated on demand for each request.
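To make the "export" step concrete, here is a minimal sketch of rendering pages once at build time. It is not how any particular SSG works internally; the `Page` shape, `renderPage`, and `exportSite` are hypothetical names chosen for illustration, and a real generator would read content from a CMS or files and write the output to disk.

```typescript
// Hypothetical content record; a real project would load these from a CMS or files.
interface Page {
  path: string; // URL path, e.g. "/about"
  title: string;
  body: string;
}

// Escape text so content cannot inject markup into the generated HTML.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Render one page to a complete HTML document.
function renderPage(page: Page): string {
  return [
    "<!doctype html>",
    `<html><head><title>${escapeHtml(page.title)}</title></head>`,
    `<body><h1>${escapeHtml(page.title)}</h1><p>${escapeHtml(page.body)}</p></body></html>`,
  ].join("\n");
}

// "Export" step: runs once at build time and returns a file-name -> HTML map
// that a deploy step could upload to a CDN or static host.
function exportSite(pages: Page[]): Record<string, string> {
  const output: Record<string, string> = {};
  for (const page of pages) {
    const trimmed = page.path.replace(/^\/|\/$/g, "");
    const file = trimmed === "" ? "index.html" : `${trimmed}/index.html`;
    output[file] = renderPage(page);
  }
  return output;
}

const site = exportSite([
  { path: "/", title: "Home", body: "Welcome" },
  { path: "/about", title: "About <us>", body: "Who we are" },
]);
```

After this step, serving a page is a plain file lookup in `site`; no rendering code runs when a visitor requests it.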

"A" for API

APIs enrich your website/web app functionality with the stuff you cannot do at build-time, such as commerce, payment, fetching dynamic data based on user behavior, executing a search, retrieving auth context, retrieving protected content, etc.

There is an API for practically anything these days, and the Jamstack ecosystem is rich with powerful API-centric services. That being said, "API" can also mean calling your existing APIs, including legacy ones, from the front-end.

Many existing APIs are not built to be called from the browser safely. Jamstack-oriented platforms, such as Netlify and Vercel, provide the ability to deploy serverless functions that can act as a proxy to the legacy API or leverage CDN-based rewrite functionality to expose some APIs.
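As an illustration of the proxy idea, the sketch below shows the core of what such a serverless function might do: accept only allow-listed paths and attach a server-side secret before forwarding to the legacy API. The request shapes, the `/api` prefix, the `x-api-key` header, and the function name are all assumptions made for this example; Netlify and Vercel each define their own handler signatures, which are not shown here.

```typescript
// Minimal request shapes, defined here so the sketch is self-contained.
interface IncomingRequest {
  path: string; // e.g. "/api/orders/42"
  method: string;
  headers: Record<string, string>;
}

interface UpstreamRequest {
  url: string;
  method: string;
  headers: Record<string, string>;
}

// Only these path prefixes are forwarded; everything else is rejected.
const ALLOWED_PREFIXES = ["/api/orders", "/api/products"];

// Translate a browser request into a request for the legacy API.
// Returns null when the path is not allow-listed.
function toUpstreamRequest(
  req: IncomingRequest,
  legacyBaseUrl: string,
  apiKey: string
): UpstreamRequest | null {
  const allowed = ALLOWED_PREFIXES.some(
    (prefix) => req.path === prefix || req.path.indexOf(prefix + "/") === 0
  );
  if (!allowed) return null;
  return {
    url: legacyBaseUrl + req.path.replace(/^\/api/, ""),
    method: req.method,
    headers: {
      accept: "application/json",
      // The secret is added server-side and is never shipped to the browser.
      // Browser headers (cookies, etc.) are deliberately not forwarded.
      "x-api-key": apiKey,
    },
  };
}
```

A real function would then send the resulting request with an HTTP client and return the response to the browser, answering with a 404 or 403 when `null` comes back.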

"J" for JavaScript

JavaScript runs the web, as we know. When it comes to Jamstack, the role of JavaScript can be twofold (depending on the Static Site Generator / Framework of choice).

If a JavaScript-based Static Site Generator is used (Next.js, Nuxt, Gatsby, etc.), JavaScript is what you build your presentation layer in (using React, Vue, or Svelte as the UI library, for example). This means that JavaScript also typically runs during the rehydration phase and enables ongoing client-side rendering during page/route changes. This allows you to use a single technology (JavaScript) for both server-side (build-time) rendering and client-side rendering.

In some cases, it may make sense to disable the client-side JavaScript that the SSG adds to the page, thus leveraging the Static Site Generator only as a pre-rendering mechanism. With Uniform, this technique is often used with Sitecore MVC sites.

Independently of the SSG of choice, JavaScript is used to enhance the pre-rendered page and make it interactive and dynamic. One use case where this comes in handy is client-side data fetching during rehydration, which lets you enrich the pre-rendered page content and functionality with dynamic data.
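The enrichment step can be sketched as a merge of build-time data with data fetched in the browser. The product shapes and the `enrichProduct` function below are hypothetical; the point is only that the pre-rendered values remain a usable fallback when the dynamic call is slow, fails, or returns data for a different item.

```typescript
// Data baked into the page at build time (hypothetical shape).
interface PrerenderedProduct {
  sku: string;
  name: string;
  listPrice: number;
}

// Data only known at request time, e.g. fetched from a pricing API (hypothetical shape).
interface LiveProductData {
  sku: string;
  price: number;
  inStock: boolean;
}

interface ProductView {
  sku: string;
  name: string;
  price: number;
  inStock: boolean | null; // null means "not known yet"
  isLive: boolean;
}

// Merge live data over the pre-rendered data; fall back to build-time values
// when live data is missing or belongs to a different product.
function enrichProduct(
  base: PrerenderedProduct,
  live: LiveProductData | null
): ProductView {
  if (live === null || live.sku !== base.sku) {
    return { sku: base.sku, name: base.name, price: base.listPrice, inStock: null, isLive: false };
  }
  return { sku: base.sku, name: base.name, price: live.price, inStock: live.inStock, isLive: true };
}
```

In a component, the first render would use `enrichProduct(base, null)`, matching the static HTML, and a later render would pass in the fetched data.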

How it Works Logically

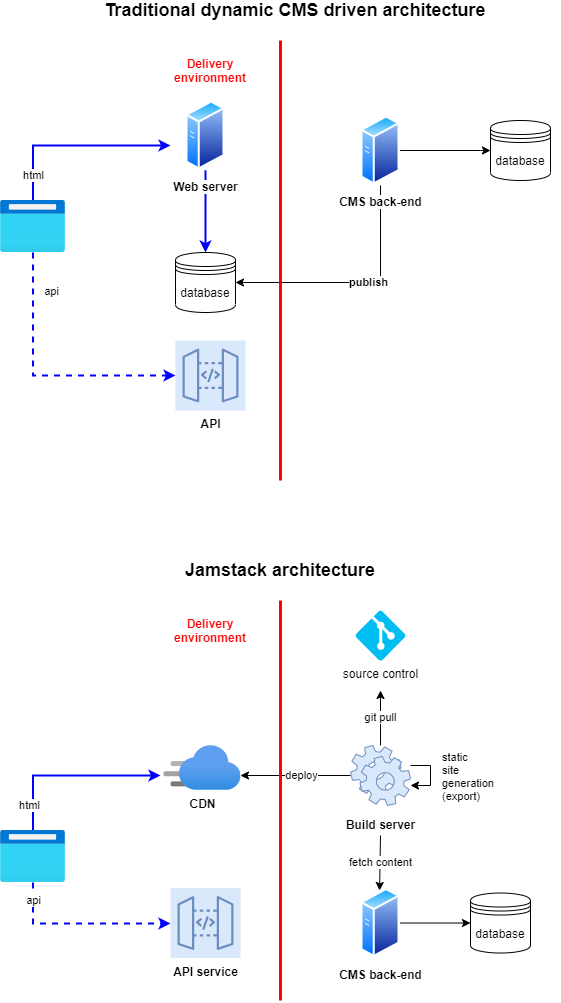

Let's consider both the traditional monolithic dynamic CMS (not a headless CMS) and the Jamstack approach.

The primary difference lies in the set of components responsible for page assembly. In the traditional dynamic CMS architecture, page rendering occurs on every request, even when caching is involved. That approach typically involves a set of web server instances behind a load balancer for scaling, where the app code is deployed together with the CMS runtime, plus at least one database server from which content is retrieved on a cache miss in web server memory.

The Jamstack approach, as you can see above, collapses the content delivery stack into one component: the Content Delivery Network. The work of baking pages is done ahead of time by the build server. That work is hidden from visitors, who never pay the price of a cold page render. Having fewer components reduces the complexity of the content delivery environment and brings many other benefits.

Key Benefits

Performance

This is a key differentiator of the Jamstack approach, specifically in the server-side performance of rendering a page. Jamstack enables a very low Time to First Byte (TTFB), typically below 50ms, because the job of rendering the page is done behind the scenes (see "M" for Markup above). Jamstack sites are delightful to use since they load almost instantly and independently of your web server or database.

Scale

It's nice to render a page fast for a few visitors, but wouldn't it be even nicer to sustain great performance under virtually any load, across the globe? Since the pre-baked pages are served from a global Content Delivery Network, it's possible to achieve such low TTFB across the globe and at virtually any scale. CDNs are elastic by nature, which is crucial during traffic spikes, while autoscaling heavy monolithic backends typically takes time (sometimes long minutes to spin up and warm up).

Cost

Cost savings are harder to generalize, but with Jamstack you will generally find you are saving on infrastructure. This is especially true compared to PaaS at larger scale for global multi-tenant solutions, where the only way to scale is to add more web worker instances, scale up the SQL database, and geo-replicate the environments to other regions to reduce TTFB for users there, all of which is often cost-prohibitive.

Achieve Decoupling and Minimize Platform Lock-in

Since all static files are decoupled from your CMS web server, you can host your website/web app using practically any cloud provider. All the top providers (e.g., Amazon S3, Azure Blob Storage, Akamai NetStorage, Cloudflare Workers Sites) offer "static file" hosting on top of blob storage. You can even host a Jamstack site on-prem using the IIS Static Files handler, should you desire to do so.

What is even more exciting is that you can adopt a hybrid approach where the back-end stays hosted within your data center or cloud, while the front-end is hosted on a next-gen Jamstack-centric platform. That platform becomes the new origin for your web pages and static artifacts, runs the builds, and handles deployment to the CDN along with cache invalidation.

Streamlined Operations

Another useful side-effect in Jamstack is that things like instant rollback and auditing become easy to configure with pre-built markup.
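One way to see why rollback is cheap with pre-built markup: each deploy is an immutable set of files, and "live" is just a pointer to one of them. The `DeployHistory` class below is a hypothetical in-memory sketch of that idea, with a simple audit log; hosting platforms implement this in their own ways.

```typescript
// One immutable snapshot of the generated site.
interface Deploy {
  id: string;
  files: Record<string, string>;
}

class DeployHistory {
  private deploys: Deploy[] = [];
  private activeIndex = -1;
  private audit: string[] = [];

  // Store a new snapshot and make it live.
  publish(id: string, files: Record<string, string>): void {
    this.deploys.push({ id, files: { ...files } });
    this.activeIndex = this.deploys.length - 1;
    this.audit.push(`publish ${id}`);
  }

  // Point "live" at the previous snapshot. No rebuild or re-render is needed.
  rollback(): boolean {
    if (this.activeIndex <= 0) return false;
    this.activeIndex -= 1;
    const active = this.deploys[this.activeIndex];
    this.audit.push(`rollback to ${active ? active.id : "unknown"}`);
    return true;
  }

  // Serve a file from whichever snapshot is currently live.
  serve(file: string): string | undefined {
    const active = this.deploys[this.activeIndex];
    return active ? active.files[file] : undefined;
  }

  // Chronological record of what went live and when it was reverted.
  log(): string[] {
    return this.audit.slice();
  }
}
```

Because older snapshots are kept intact, the audit trail also doubles as a record of exactly which markup was live after each change.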

Jamstack also eliminates cold startup times after deployment; all the work, pre-rendering, is done behind the scenes. The end-users never experience long TTFB while the web servers are warming up.

Security

Security is improved because the pages are built behind the scenes during the build phase. Page requests require no runtime calls to the database or other services, which minimizes the attack surface.

Adopting Jamstack at Scale

If you are reading this, you may be wondering... This is too good to be true! Does it apply to my use case at Acme Corporation? We have thousands of pages, hundreds of sites in tens of languages and we need to serve a global audience. Plus, we don't use any fancy JavaScript Frameworks / Static Site Generators.

One of the highlight examples of using Jamstack at scale is the story of Microsoft Docs. If docs.microsoft.com can run on Jamstack, it's very likely that your site can as well.

Here are the key aspects to consider to ensure your Jamstack adoption goes smoothly.

- Optimize the Build Phase Performance

This phase is a supercritical element in Jamstack; it is crucial to ensure your build runs as fast as possible.

- Faster build server: While this may seem like an obvious step, it often gets missed. Running your build on an old VM may not be in your best interest.

- Running export phase in parallel: Typically, if your back-end can deliver content fast enough, you can render pages in multiple threads.

- Run concurrent build agents: You can run multiple builds for different sites/teams simultaneously. Some Jamstack-centric platforms make it very easy to gain this capability.

- Leverage build caching: The application code does not need to be rebuilt when only a content change needs to go live; skipping the build phase and running just the export phase can shave minutes off the time between content being published in the CMS and its appearing live on the website.

- Invest in setting up a proper preview environment: Most Static Site Generators can also do Server-Side Rendering, allowing business users to preview changes before they are published.

- Implement/configure incremental export: In this approach, only the pages changed during the publishing process are re-generated and re-deployed. This significantly cuts the time between content being published in the CMS and its appearing live on the website.
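The incremental export idea can be sketched as a comparison of content fingerprints between two publishes. Everything here is an assumption for illustration: the manifest format, the function names, and the use of an FNV-1a-style hash (picked only because it needs no dependencies; a real pipeline would more likely use a cryptographic hash and would also track template or layout changes that affect many pages).

```typescript
// FNV-1a-style 32-bit hash over UTF-16 code units, used as a content fingerprint.
function fingerprint(text: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return (hash >>> 0).toString(16);
}

// Maps a page path to the fingerprint of the content it was last exported from.
type Manifest = Record<string, string>;

interface SourcePage {
  path: string;
  source: string; // raw content the page is rendered from
}

interface ExportPlan {
  toRender: string[]; // new or changed pages
  toDelete: string[]; // pages that no longer exist
  manifest: Manifest; // manifest to store for the next publish
}

// Compare current content against the previous manifest and decide
// which pages actually need to be re-generated and re-deployed.
function planIncrementalExport(previous: Manifest, pages: SourcePage[]): ExportPlan {
  const manifest: Manifest = {};
  const toRender: string[] = [];
  for (const page of pages) {
    const hash = fingerprint(page.source);
    manifest[page.path] = hash;
    if (previous[page.path] !== hash) toRender.push(page.path);
  }
  const toDelete = Object.keys(previous)
    .filter((path) => !(path in manifest))
    .sort();
  return { toRender, toDelete, manifest };
}
```

On the first publish the previous manifest is empty, so every page is rendered; on later publishes only the pages whose fingerprints differ are touched.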

- Education

This touches business user, developer, and DevOps team education. Jamstack can be a departure from the current way of thinking, and expectations may need to be adjusted slightly. The good news is that the benefits are clear, and once users see them, they don't want to go back.

- It's your Jamstack

Jamstack allows you to map your architecture on top of existing infrastructure. You can utilize it as an extension of your existing CI/CD pipeline. If you are looking for a new CDN, you have many options with first-class support. To adopt Jamstack, you do not have to have a mini-revolution within your IT department. If you are already using Azure, for example, you can continue to use it with Jamstack.

- Start with Small Wins

While it's easy to get excited about Jamstack and attempt to "Jamstackify" your whole digital presence, it's always best to start with something small. Flex this approach, get comfortable, show significant results, then bite off a bigger piece. Often the most static and visited pages are a great start.